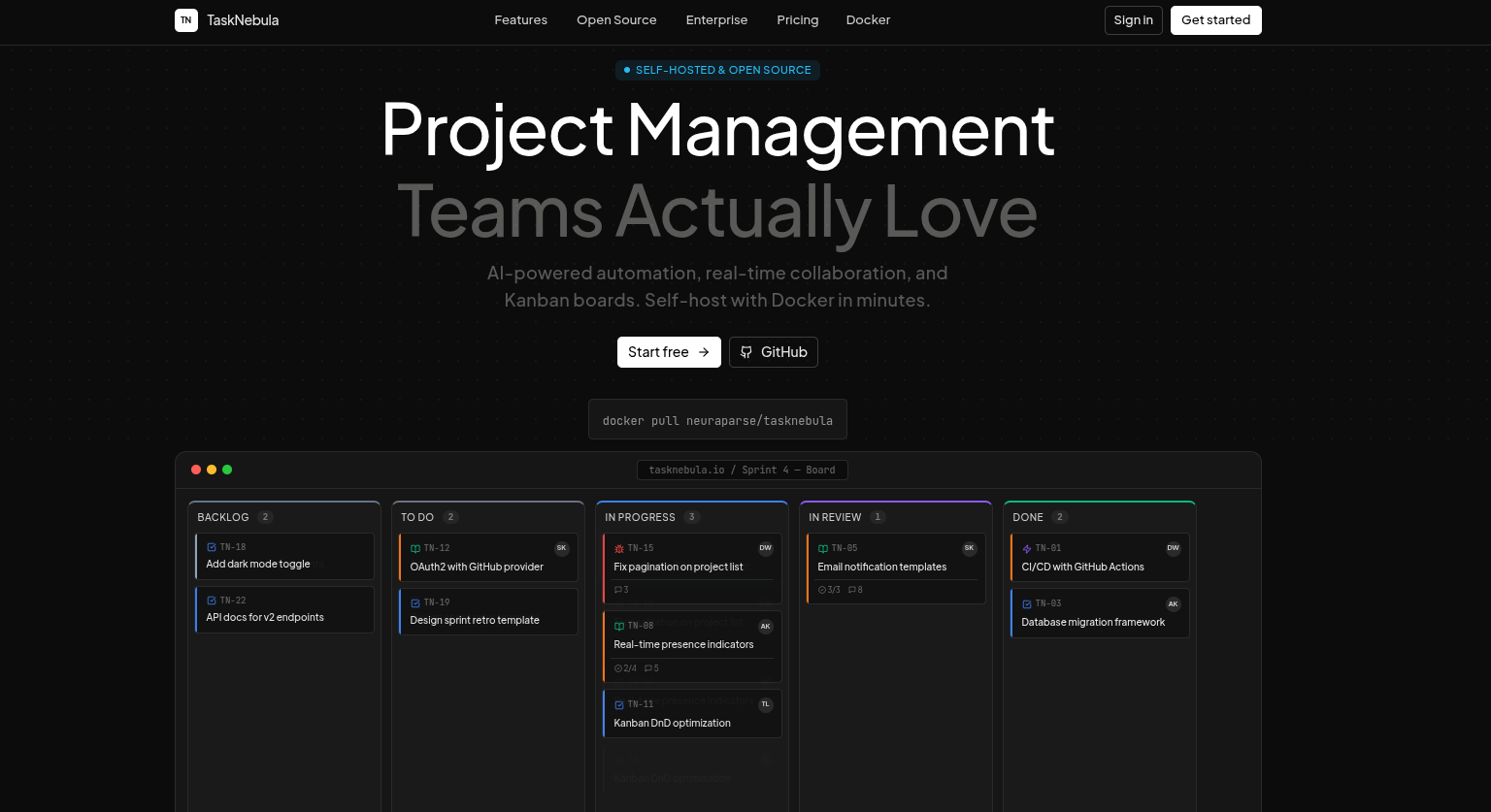

A self-hosted issue tracker that feels like Linear, scales like Jira, and drafts work with you like a pair programmer. Bring your own OpenAI / Anthropic key — or run fully offline with the native planner.


Draft issues from a prompt |

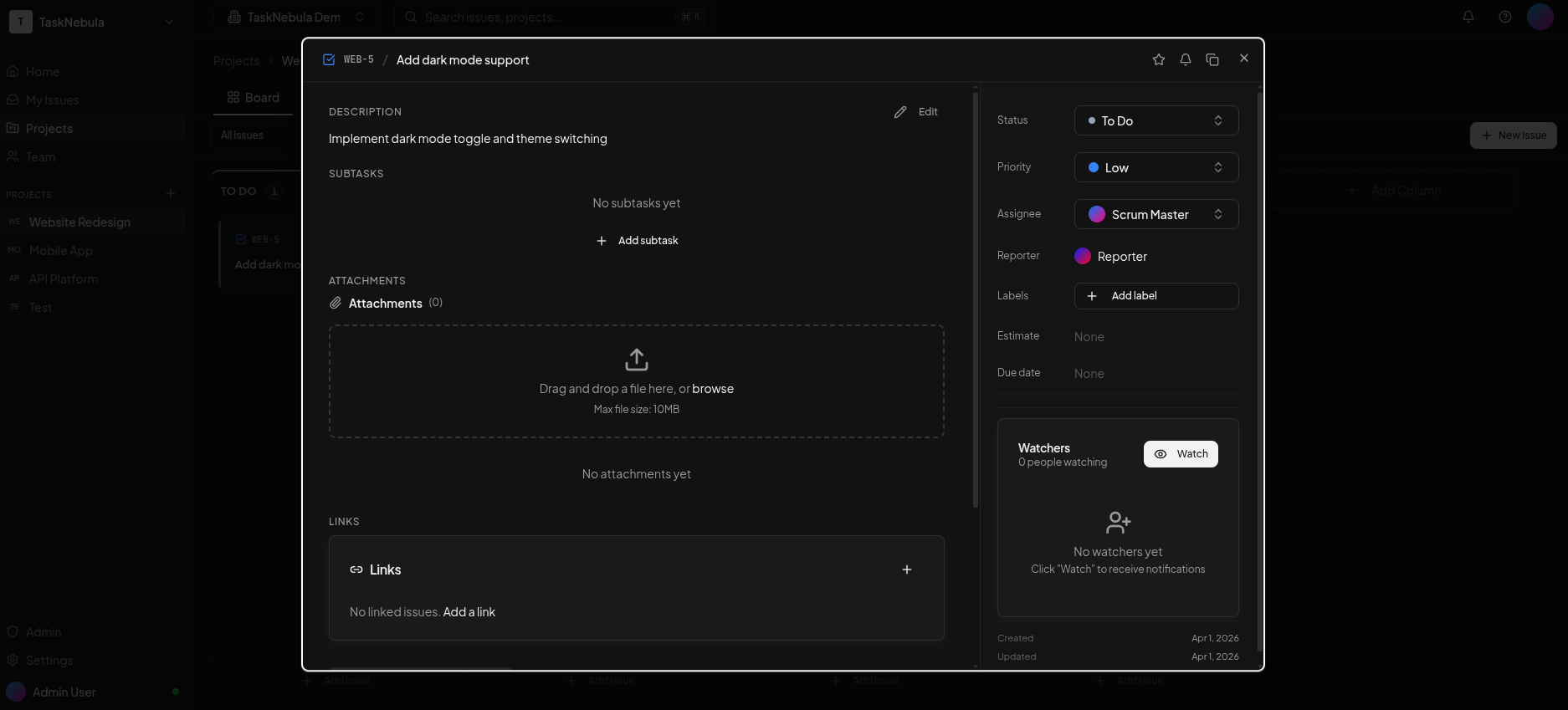

Per-issue AI assist |

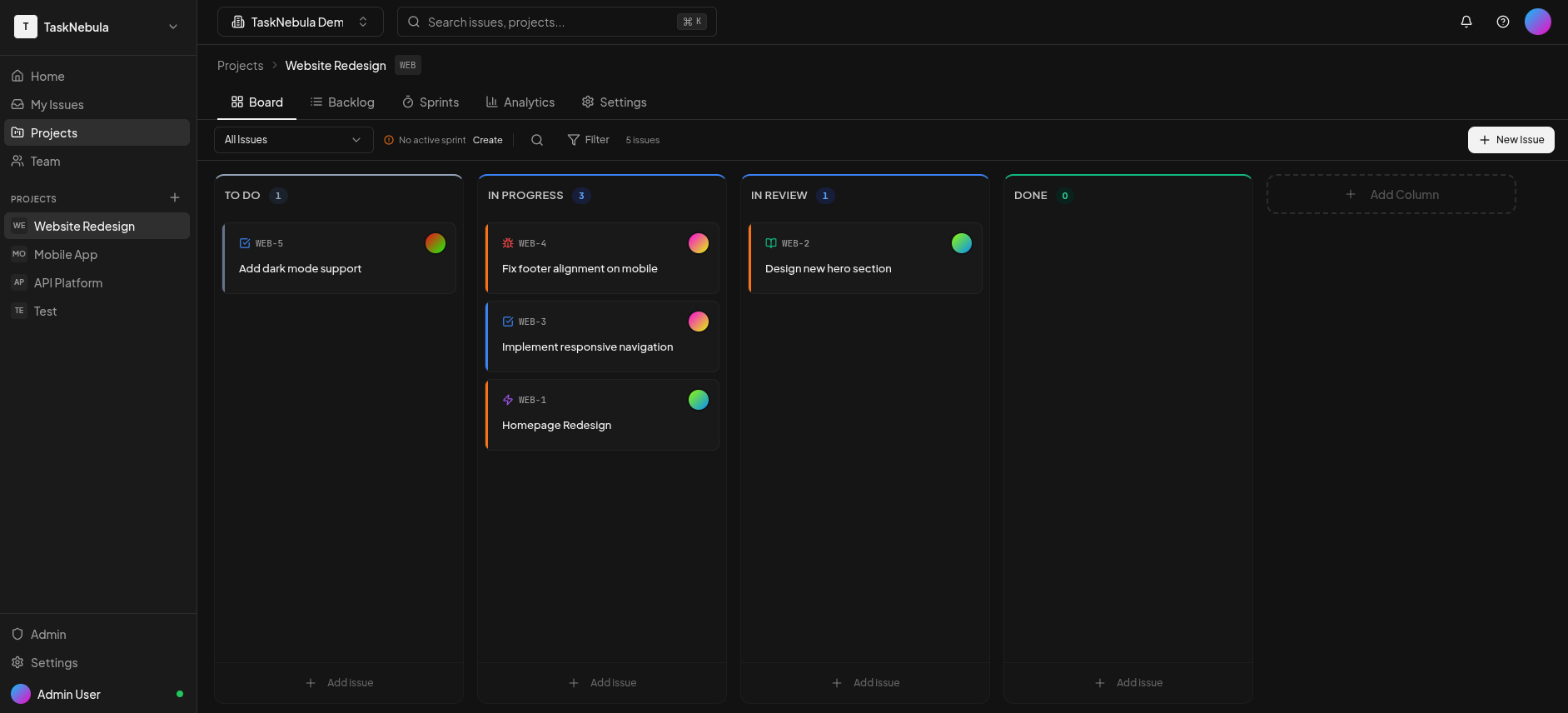

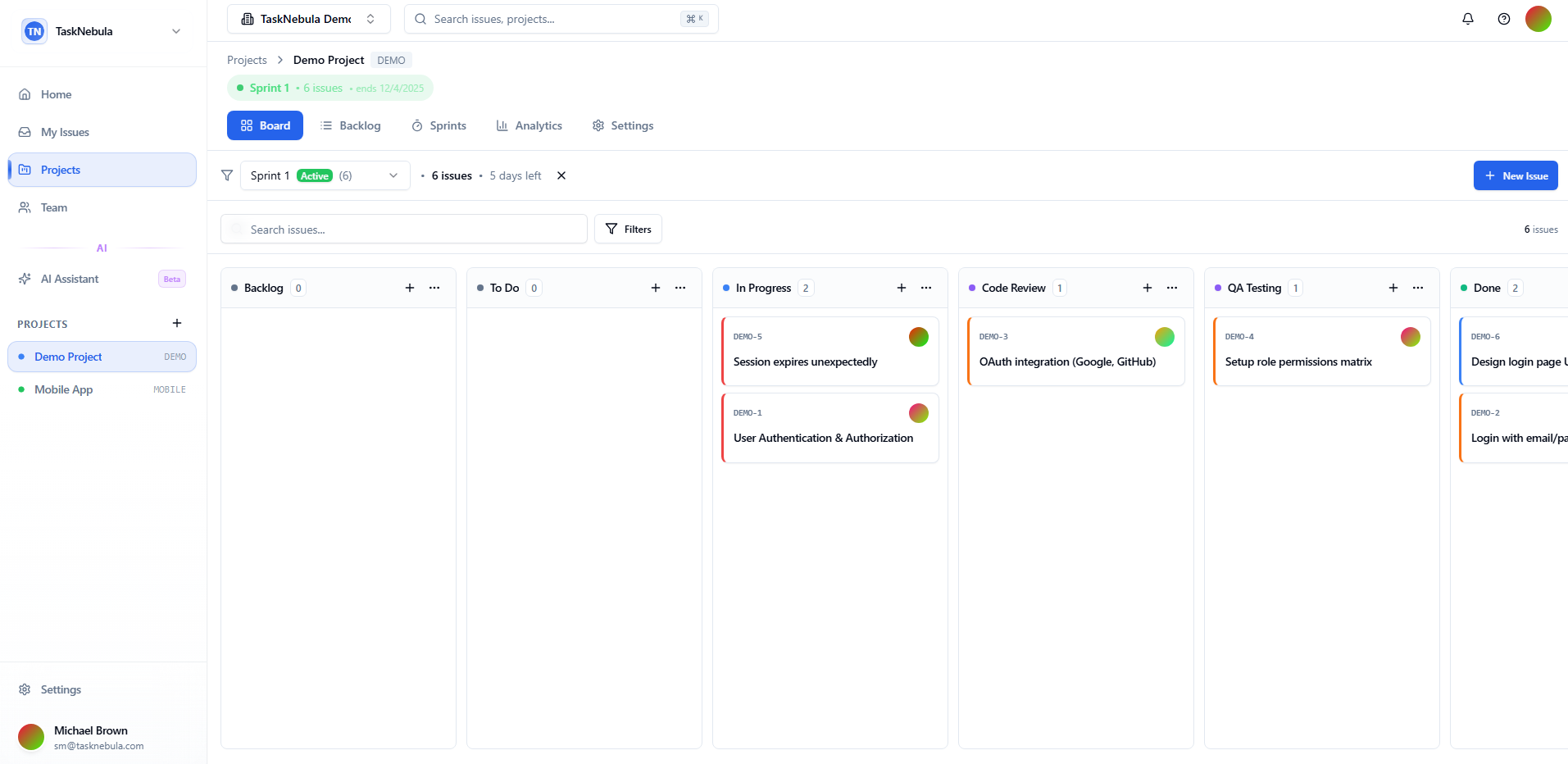

Real-time Kanban board |

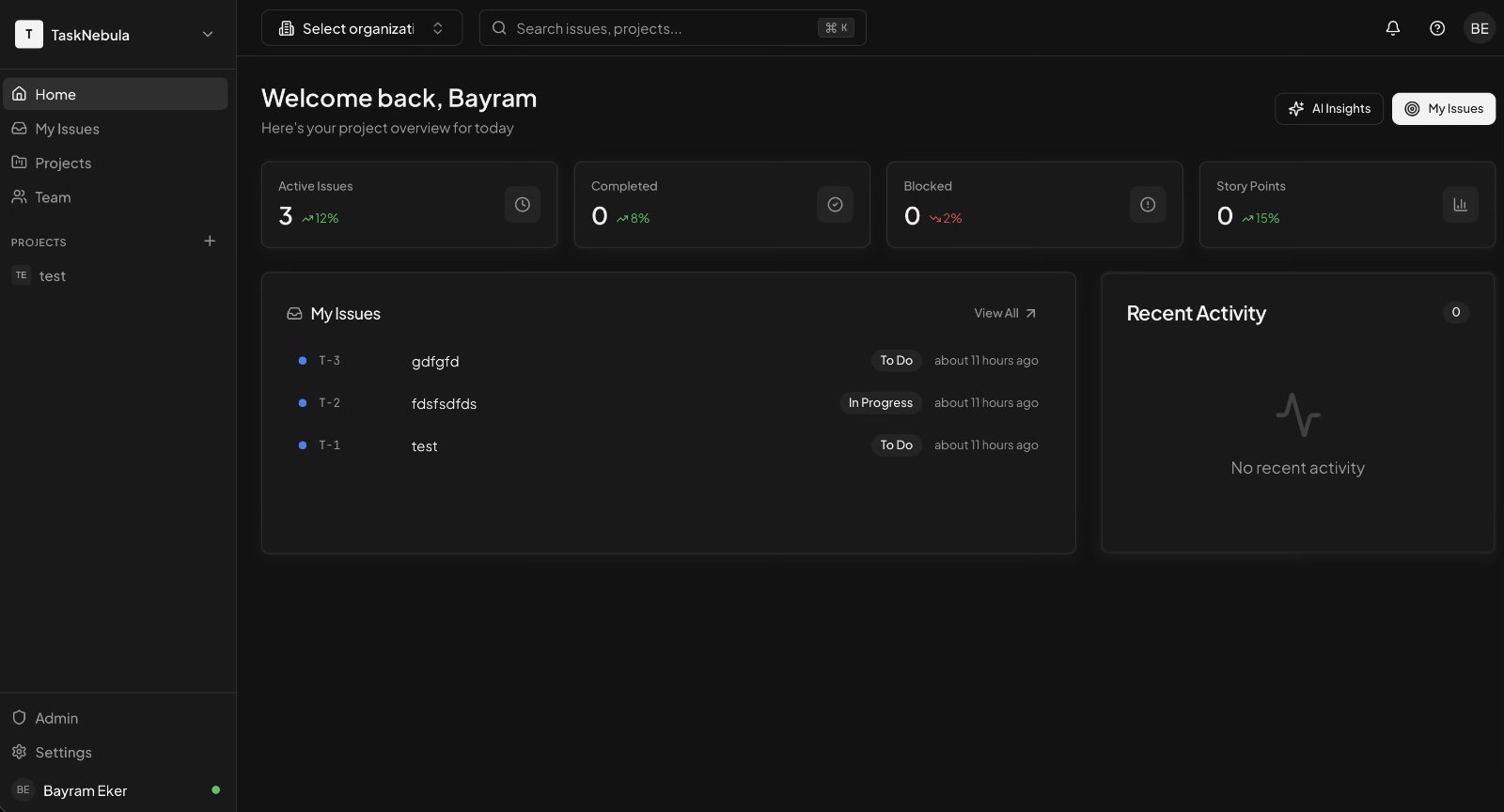

Workspace dashboard |

One curl, then open your browser:

    curl -fsSL https://raw.githubusercontent.com/neuraparse/tasknebula/main/scripts/quickstart.sh | bash

The script pulls `neuraparse/tasknebula:latest`, spins up Postgres + Redis + LiveKit via Docker Compose, generates a strong `AUTH_SECRET`, and opens http://localhost:3000. The first-run wizard creates your admin account.

Build from source instead

    git clone https://github.com/neuraparse/tasknebula.git
    cd tasknebula
    cp .env.example .env
    echo "AUTH_SECRET=$(openssl rand -base64 32)" >> .env
    docker compose up -d --build

Pin a specific version

    # default is :latest
    TASKNEBULA_IMAGE=neuraparse/tasknebula:0.2.4 docker compose up -d

Release notes live on the GitHub releases page.
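The `echo ... >> .env` step in the build-from-source commands appends a new `AUTH_SECRET` line every time it runs. A minimal sketch of an idempotent variant follows. The function name `ensure_auth_secret` is hypothetical and not part of TaskNebula, and the `/dev/urandom` fallback is an assumption for machines without `openssl`.

```bash
# Hypothetical helper (not part of TaskNebula): write AUTH_SECRET only if missing.
ensure_auth_secret() {
  local env_file="${1:-.env}" secret
  touch "$env_file"

  # Keep an existing non-empty secret instead of appending a second line.
  if grep -Eq '^AUTH_SECRET=.+' "$env_file"; then
    echo "AUTH_SECRET already set in $env_file"
    return 0
  fi

  # Same generator as the README; fall back to /dev/urandom if openssl is absent.
  if command -v openssl >/dev/null 2>&1; then
    secret="$(openssl rand -base64 32)"
  else
    secret="$(head -c 32 /dev/urandom | base64)"
  fi

  # Make sure the new entry starts on its own line.
  if [ -s "$env_file" ] && [ -n "$(tail -c1 "$env_file")" ]; then
    echo >> "$env_file"
  fi
  printf 'AUTH_SECRET=%s\n' "$secret" >> "$env_file"
  echo "AUTH_SECRET written to $env_file"
}
```

Calling `ensure_auth_secret .env` in place of the `echo` line should leave a previously generated secret untouched on repeat runs.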

AI is off by default. Enable it per workspace from Settings → AI & Agents in under 30 seconds. Everything is DB-managed — no env vars to redeploy when you rotate a key.

| Feature | Where | What it does |
|---|---|---|
| Draft-with-AI | Backlog → Draft with AI | Type one prompt, the LLM decides whether it's one ticket or a whole checklist and returns structured, editable drafts. Select which to create in bulk. |
| Per-issue assist | Issue detail sidebar → AI assist | Summarise an issue with its comments, rewrite the description, suggest next steps, or propose labels. One click to Apply, no copy-paste. |
| Native fallback | Built-in | If no LLM credential is configured, TaskNebula still ships a deterministic heuristic planner so the buttons are never dead. |
| Platform keys | Admin → Agent control | Super-admins drop in an OpenAI / Anthropic key that all workspaces fall back to. AES-256-GCM encrypted, redacted previews, audit-logged rotations. |
| Workspace keys | Settings → AI & Agents → Quick setup | Each workspace can override the platform default with its own key. Single Quick-Setup button writes provider + model + key + toggle in one transaction. |
| Model profiles | Settings → AI & Agents → Your model profiles | Save reusable provider+model+tuning combos (temperature, max tokens, reasoning effort) with full revision history. Saved profiles appear inline in the Quick Setup dropdown. |

| Provider | Models out of the box |
|---|---|
| OpenAI | gpt-4o, gpt-4o-mini, gpt-4-turbo, gpt-4, gpt-3.5-turbo, o1, o1-mini, plus the speculative gpt-5.x family |
| Anthropic | claude-opus-4-7, claude-sonnet-4-6, claude-haiku-4-5, plus claude-3-5-sonnet, claude-3-5-haiku, claude-3-opus |
| Native | Built-in heuristic planner (no API calls) |
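The tables describe a fallback order for credentials: a workspace key overrides the platform key, and the native planner is used when neither exists. The sketch below only illustrates that precedence. `resolve_ai_backend` is a hypothetical name, the real logic lives inside the application, and the per-workspace enable toggle is ignored here.

```bash
# Hypothetical illustration of the documented precedence, not TaskNebula code:
# workspace key first, then platform key, then the native planner.
resolve_ai_backend() {
  local workspace_key="${1:-}" platform_key="${2:-}"
  if [ -n "$workspace_key" ]; then
    echo "workspace"
  elif [ -n "$platform_key" ]; then
    echo "platform"
  else
    echo "native"
  fi
}
```

For example, `resolve_ai_backend "" "platform-key"` selects the platform credential, and calling it with two empty arguments selects the native planner.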

Every failure (bad key, rate limit, model not available) becomes an in-app notification with a Sparkles/Bot icon, a one-line action hint ("Open Settings → AI & Agents to add a key"), and a deep link straight to the relevant project AI settings. The full audit trail is in Admin → Audit logs.


    # .env
    APP_URL=https://tasks.yourcompany.com
    AUTH_SECRET=your-secret-here # openssl rand -base64 32

Put any reverse proxy in front for SSL. Caddy is the easiest:

    tasks.yourcompany.com {
        reverse_proxy localhost:3000
    }

See `.env.example` for the full list: SMTP, OAuth, LiveKit tuning, optional platform LLM keys, etc. Everything AI-related can be configured through the UI after first boot; env vars are only a fallback for dev.
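Before exposing the instance, it can help to confirm that the two production values are really set. The following is a rough preflight sketch, not something shipped with TaskNebula. The names `read_env_value` and `check_prod_env` are hypothetical, the 32-character minimum is an assumed threshold, and quoted values are not handled.

```bash
# Hypothetical preflight check (not shipped with TaskNebula).

# Print the last value assigned to KEY in FILE, minus any trailing " # comment".
read_env_value() {
  local file="$1" key="$2"
  grep -E "^${key}=" "$file" | tail -n1 | cut -d= -f2- | sed -E 's/[[:space:]]+#.*$//'
}

check_prod_env() {
  local env_file="${1:-.env}" app_url auth_secret status=0
  [ -r "$env_file" ] || { echo "cannot read $env_file" >&2; return 1; }

  app_url="$(read_env_value "$env_file" APP_URL)"
  auth_secret="$(read_env_value "$env_file" AUTH_SECRET)"

  # The public URL should be the https:// address served by the reverse proxy.
  case "$app_url" in
    https://*) ;;
    *) echo "APP_URL should be an https:// URL, got: '$app_url'" >&2; status=1 ;;
  esac

  # Reject the placeholder from the example; 32 characters is an assumed minimum.
  if [ -z "$auth_secret" ] || [ "$auth_secret" = "your-secret-here" ]; then
    echo "AUTH_SECRET is missing or still the placeholder" >&2
    status=1
  elif [ "${#auth_secret}" -lt 32 ]; then
    echo "AUTH_SECRET looks short (${#auth_secret} characters)" >&2
    status=1
  fi

  return "$status"
}
```

Running `check_prod_env .env && docker compose up -d` would then refuse to start while the placeholder secret is still in place.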

LiveKit ships plain ws:// on port 7880. A browser that loads TaskNebula over https:// blocks plain ws:// connections as mixed content, so production needs wss:// with TLS terminated in front of LiveKit. Put nginx (or Caddy) on its own subdomain:

- DNS: add `livekit.your-domain.example.com` → same server IP as the app.
- nginx: copy the ready template at `nginx/tasknebula-livekit.conf`, replace the hostname, then:

      sudo certbot --nginx -d livekit.your-domain.example.com
      sudo nginx -t && sudo nginx -s reload

- .env:

      NEXT_PUBLIC_LIVEKIT_URL=wss://livekit.your-domain.example.com

- Rebuild so the build-time env is baked into the Next bundle:

      docker compose up -d --build web

The template terminates TLS, forwards to 127.0.0.1:7880, and keeps the WebSocket upgrade headers + 24h idle timeout LiveKit needs.
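Because `NEXT_PUBLIC_LIVEKIT_URL` is baked in at build time, a wrong scheme only shows up after a rebuild. As a small sketch of the mixed-content rule, the function below compares the app URL with the LiveKit URL before building. `check_livekit_scheme` is a hypothetical name, and the check looks only at URL prefixes, not at whether TLS actually works.

```bash
# Hypothetical pre-build check: an https:// page needs a wss:// LiveKit URL.
check_livekit_scheme() {
  local app_url="$1" livekit_url="$2"
  case "$app_url" in
    https://*)
      case "$livekit_url" in
        wss://*)
          echo "ok: secure page, secure WebSocket"
          ;;
        *)
          echo "mixed content: '$livekit_url' will be blocked on an https:// page" >&2
          return 1
          ;;
      esac
      ;;
    *)
      # Plain-http pages (typical for local dev) may use ws:// directly.
      echo "ok: page is not served over https://"
      ;;
  esac
}
```

For instance, `check_livekit_scheme "https://tasks.yourcompany.com" "ws://localhost:7880"` returns a non-zero status, while the `wss://livekit.your-domain.example.com` value from the steps above passes.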

    docker compose up -d                        # Start (pulls :latest)
    docker compose down                         # Stop
    docker compose logs -f web                  # Tail the web service
    docker compose pull web && \
      docker compose up -d                      # Update to the newest image
    docker compose up -d --build                # Rebuild from local source
    git pull && docker compose up -d --build    # Pull + rebuild

`docker-compose.yml` defaults to `image: neuraparse/tasknebula:latest`. Set `TASKNEBULA_IMAGE=neuraparse/tasknebula:0.2.4` in `.env` to pin.
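The pinning behaviour reads like a default-value substitution. The sketch below mimics it in plain shell, assuming the compose file uses the common `${TASKNEBULA_IMAGE:-neuraparse/tasknebula:latest}` pattern. That pattern is an assumption about the file's contents, and both function names are hypothetical.

```bash
# Mimic the assumed Compose default: use TASKNEBULA_IMAGE if set, else :latest.
effective_image() {
  printf '%s\n' "${TASKNEBULA_IMAGE:-neuraparse/tasknebula:latest}"
}

# Succeed only when the effective image carries an explicit, non-latest tag.
is_pinned() {
  case "$(effective_image)" in
    *:latest) return 1 ;;
    *:*)      return 0 ;;
    *)        return 1 ;;
  esac
}
```

With Docker available, `docker compose config` prints the fully resolved file, which is the authoritative way to see which image will actually run.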

    pnpm install
    docker compose up -d postgres redis
    cp .env.example .env
    cp apps/web/.env.example apps/web/.env.local
    cd packages/db && pnpm tsx scripts/migrate.ts && cd ../..
    pnpm dev

Run the full test suite:

    pnpm --filter @tasknebula/web exec jest

AI-related tests live under `apps/web/src/lib/ai/__tests__`, `apps/web/src/lib/agents/__tests__`, and `apps/web/src/app/api/ai/**/__tests__`.

Pull requests welcome. See CONTRIBUTING.md for the branch model, commit conventions, and how to run the linter + tests locally before pushing.

MIT — see LICENSE.

Built by Neura Parse · Powered by open source